Explanations are a Means to an End: Decision Theoretic Explanation Evaluation

International Conference on Machine Learning (ICML) 2026

Abstract

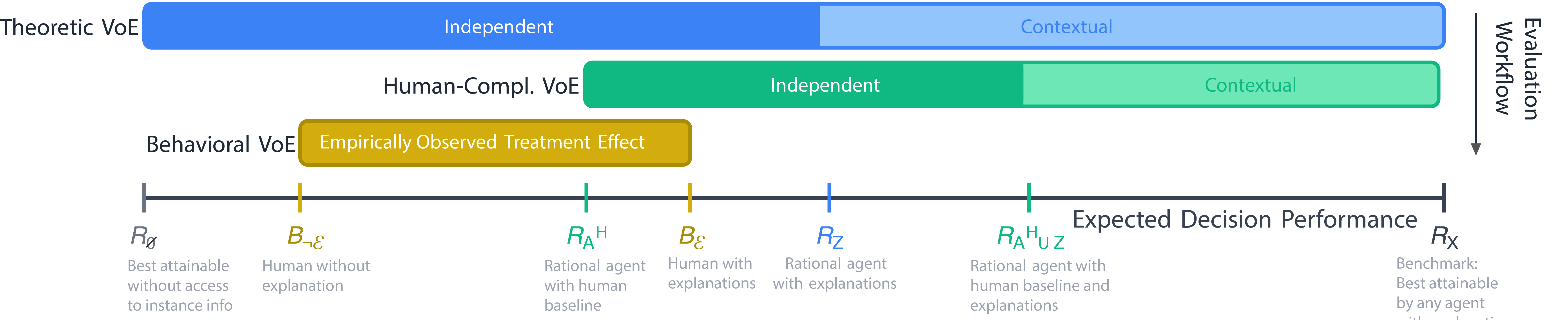

Explanations of model behavior are commonly evaluated via proxy properties that are only weakly tied to the purposes explanations serve in practice. We contribute a decision-theoretic framework that treats explanations as information signals, valued by the expected improvement they enable on a specified decision task. This approach yields three distinct estimands: 1) a theoretical benchmark that upper-bounds the performance achievable by any agent with the explanation, 2) a human-complementary value that quantifies the theoretically attainable value not already captured by a baseline human decision policy, and 3) a behavioral value representing the causal effect of providing the explanation to human decision-makers. We instantiate these definitions in a practical validation workflow, and apply them to assess explanation potential and interpret behavioral effects in human-AI decision support and mechanistic interpretability.
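To make the idea of valuing an explanation as an information signal concrete, here is a minimal sketch of the standard value-of-information calculation on a toy binary decision task. It is not the paper's implementation: the joint distribution `JOINT`, the 0/1 scoring rule, and all function names are hypothetical, chosen only for illustration. The sketch covers only the first notion (the best expected score any agent could reach with the signal, compared with the best reachable without it) and does not attempt the human-complementary or behavioral estimands.

```python
from collections import defaultdict

# Hypothetical joint distribution P(signal, state); the numbers are made up.
JOINT = {
    (0, 0): 0.4, (0, 1): 0.1,
    (1, 0): 0.1, (1, 1): 0.4,
}
ACTIONS = (0, 1)


def score(action, state):
    """Assumed scoring rule: 1 for a correct decision, 0 otherwise."""
    return 1.0 if action == state else 0.0


def best_score_without_signal(joint):
    """Best expected score when one action must be chosen for every case."""
    return max(
        sum(p * score(a, y) for (_, y), p in joint.items())
        for a in ACTIONS
    )


def best_score_with_signal(joint):
    """Best expected score when the action may depend on the signal."""
    # Group the joint mass by signal value: signal -> {state: probability}.
    by_signal = defaultdict(lambda: defaultdict(float))
    for (e, y), p in joint.items():
        by_signal[e][y] += p
    # For each signal value, pick the action with the highest expected score.
    return sum(
        max(sum(p * score(a, y) for y, p in states.items()) for a in ACTIONS)
        for states in by_signal.values()
    )


def signal_value(joint):
    """Expected improvement in score that the signal makes attainable."""
    return best_score_with_signal(joint) - best_score_without_signal(joint)


if __name__ == "__main__":
    print(f"best without signal: {best_score_without_signal(JOINT):.2f}")
    print(f"best with signal:    {best_score_with_signal(JOINT):.2f}")
    print(f"value of the signal: {signal_value(JOINT):.2f}")
```

In this toy setting the signal is informative about the state, so conditioning the action on it raises the best attainable expected score; a signal independent of the state would have value zero under the same calculation.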

Citation

BibTeX

@inproceedings{guo2026explanations,
  title={Explanations are a Means to an End: Decision Theoretic Explanation Evaluation},
  author={Guo, Ziyang and Ustun, Berk and Hullman, Jessica},
  booktitle={Proceedings of the 43rd International Conference on Machine Learning},
  year={2026}
}